AI Chatfishing: How AI Wingmen Are Rewriting Dating App Conversations

You match with someone and the chat works.

Not “fine.” Not “good enough.” It feels unusually clean.

They ask the right questions. They flirt without being gross. They remember what you said yesterday. They respond quickly, but not desperately. They’re warm without being clingy. The pacing is perfect. It’s the kind of conversation that makes you think: Finally. An adult.

It feels like effort.

But there’s a new problem in online dating: the effort might not be theirs.

“Chatfishing” is a cousin of catfishing. Catfishing is about fake identity—fake photos, fake backstory, fake life. Chatfishing targets something more basic: the words. The photos and name can be real, but the messages can be written or heavily shaped by AI instead of typed by the person you matched (Scientific American, BBC).

That changes the risk, because texting has always been the “proof of work” on dating apps. It wasn’t a guarantee, but it was a signal: time, attention, intent.

Now that signal is getting noisy.

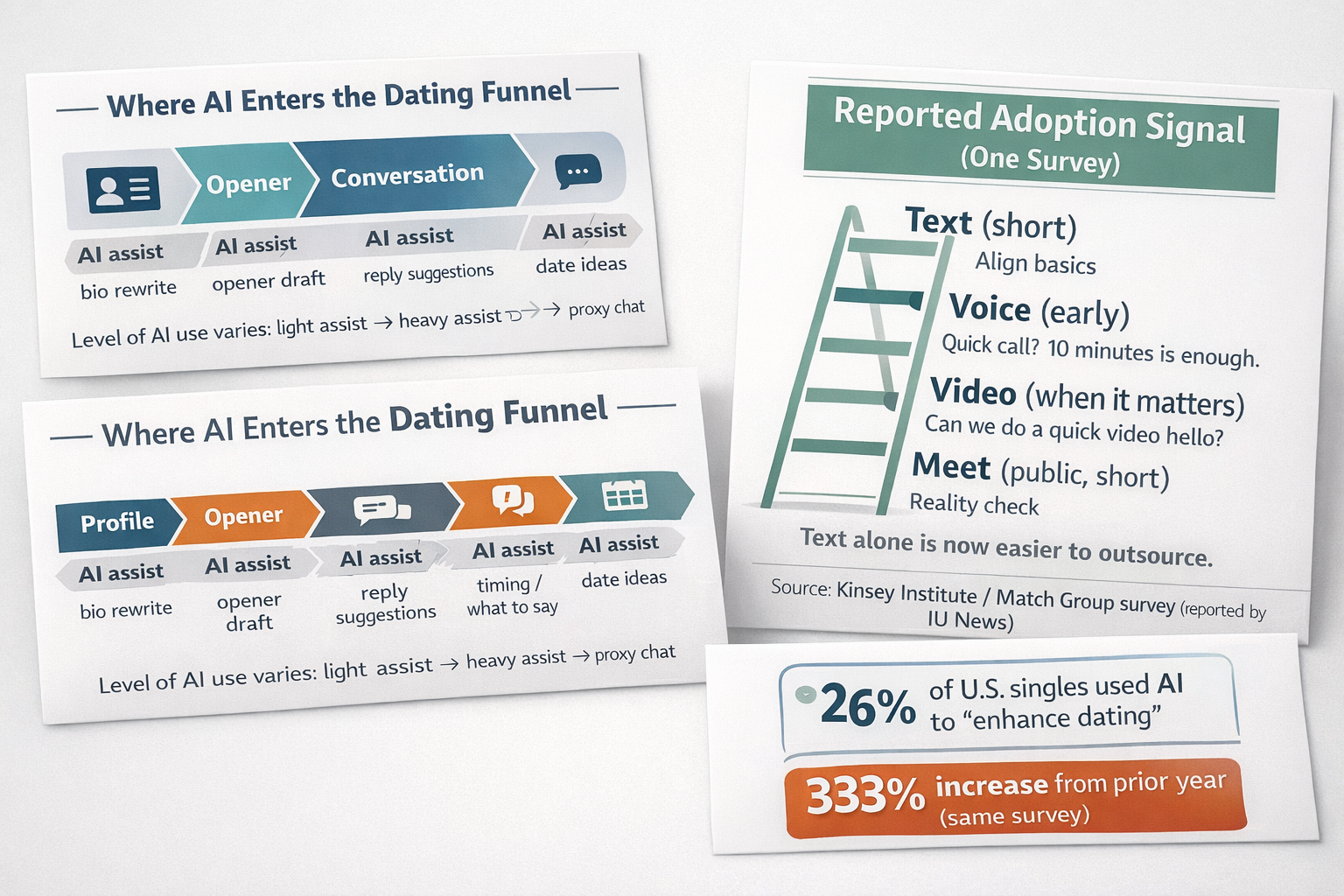

One survey reported that 26% of U.S. singles used AI tools to “enhance dating,” a 333% increase from the prior year (Kinsey Institute / Match Group via IU News).

Even if most of that use is light—just polishing messages instead of fully outsourcing the chat—it creates a new reality:

You can’t assume the person you matched is the person writing.

This post explains what’s happening, how it works, why it matters, and what to do now—without turning dating into a paranoia hobby.

The real fear isn’t “AI.” It’s what AI does to trust signals.

Most dating app advice is the same loop:

- be yourself

- don’t overthink

- move to a date quickly

- don’t get scammed

Still true. But it’s missing the new hazard: AI can manufacture “relationship signals” at near-zero cost.

In the old world, a thoughtful message took effort. Effort did not equal character, but it did prove something simple: a human spent time on you.

In the new world, “thoughtful” can be generated in seconds. The message can sound emotionally intelligent even if the person behind it is tired, distracted, awkward, or not that interested. It can also sound emotionally intelligent if the person behind it is malicious.

That’s not science fiction. It’s the direct outcome of tools that write, re-write, and “coach” conversation in real time.

And here’s what makes it hit harder than normal dating disappointment:

- You don’t just lose time. You can lose weeks.

- You don’t just misread interest. You can misread a whole personality.

- You don’t just feel rejected. You feel tricked, even if nobody intended to trick you.

Dating already has enough uncertainty. Chatfishing adds uncertainty to the one thing that used to be the baseline: “At least this person wrote these words.”

What’s changing (and why it matters)

AI use in dating sits on a spectrum.

At one end, it looks harmless: rewriting a bio, fixing grammar, calming anxiety, generating a few openers. At the other end, it becomes “proxy dating,” where an AI does most of the conversation and the human mostly supervises. There have even been experiments where AI “clones” flirt with other AI “clones” before humans step in (Scientific American).

That spectrum matters because the risk changes as AI moves deeper into the relationship-building stage.

If someone uses AI to make their bio less awkward, you can survive that. If someone uses AI to run weeks of emotional conversation, the cost is different. You can build attachment to a communication style they can’t sustain without help. You can invest in a version of them that isn’t real offline.

This is not only about scams. Honest people use AI too—sometimes to reduce anxiety, sometimes to save time, sometimes to compete.

The problem is not “AI is evil.” The problem is that the dating environment rewards the appearance of skill more than the reality of skill—and AI makes that appearance cheap.

If you want a simple way to think about it:

AI turns “effort” into “output.”

And relationships are built on the assumption that output came from effort.

When that assumption breaks, you need a new protocol.

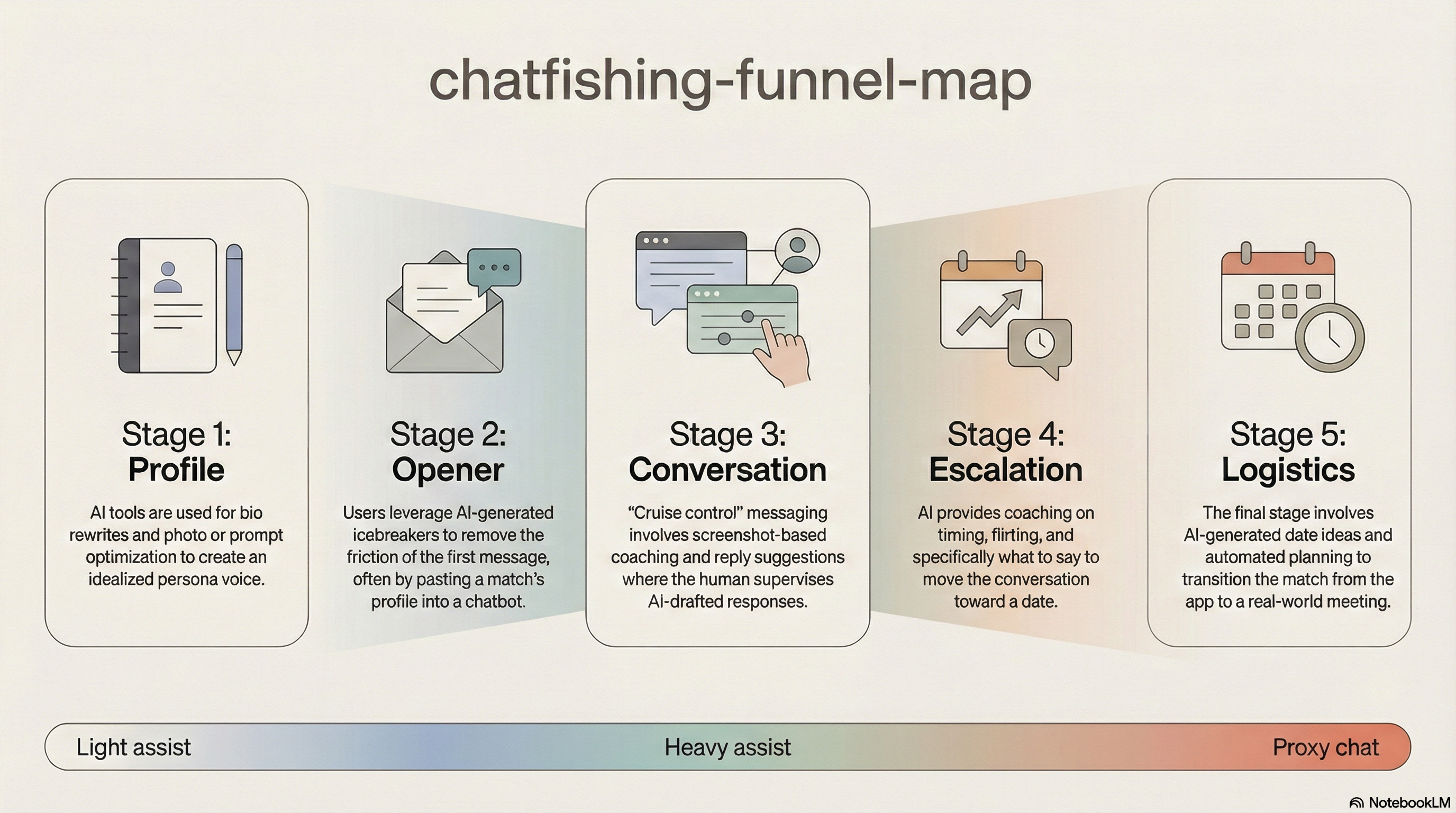

The AI wingman pipeline: how proxy dating works

Before you can defend yourself, you need a clear picture of where the AI enters the process.

1) Profile optimization: the first mask goes on before you meet

Some major platforms and companies have discussed or tested AI features that help with photos, prompts, and conversation (Business Insider). Third-party tools also help users rewrite bios, choose photos, and craft prompt answers.

That’s not inherently deceptive. People have always tried to present well. But AI can shift someone’s “voice” so far that it isn’t just polished; it reads like a different person.

The risk isn’t that the profile is fake. The risk is that the profile becomes a curated persona that sets a communication expectation the person can’t meet later.

Translation: you match with someone’s best possible copywriter.

2) AI openers: the “first message” no longer proves interest

The opener has always been a friction point. AI removes that friction. People paste a match’s profile into a chatbot and ask for a clever first message (Scientific American).

In the old world, a clever opener meant: “This person tried.”

In the new world, it might mean: “This person has a tool.”

That changes how much meaning you can safely attach to early charm.

3) “Cruise control” conversation: the danger zone for emotional attachment

This is where chatfishing becomes relationship-relevant.

Reporting describes “AI wingman” tools that analyze chat context and propose ready-to-send replies, sometimes via screenshots: you upload the conversation, the tool generates a response, you send it (Business Insider). In the simplest version, people keep a chatbot open in a second window and ask what to say next.

This can look like “just getting help,” but it can also become a pattern: every message routed through an assistant. The other person experiences a steady stream of well-paced, emotionally tuned replies and assumes that’s who they’re talking to.

Risk: you are building rapport with an output system, not the person’s natural communication style.

If you’ve ever had a first date where the person felt like a different human than the one you texted, you already understand the problem. AI increases that gap.

4) Escalation coaching: turning intimacy into a scripted sequence

Some users ask AI when to escalate: when to flirt, when to ask for a number, when to suggest meeting, what to say if there’s silence (Western University).

Again, this can help anxious people. It can also turn courtship into a flowchart where the “right words” matter more than real comfort and intent.

Risk: chemistry feels real in text, then collapses in voice or in person.

5) Logistics and date planning: helpful, but also a way to stay in the bubble

Platforms have discussed AI that suggests date ideas or helps users move from chat to meeting (Business Insider). That can be useful. It can also keep people in the “chat phase” longer if the system keeps the conversation going.

Risk: more time in the message bubble where emotion grows faster than reality.

The dark mirror: scams use the same mechanism (and they have stronger incentives)

Here’s the uncomfortable part: romance scammers adopt anything that scales trust.

Research on romance-baiting scams reported that LLM-driven agents achieved higher compliance than human operators in a controlled setting (46% vs 18%) (arXiv preprint, Help Net Security summary).

Important: this is scam research, not proof that AI improves healthy dating outcomes.

But it matters because it shows something simple and dangerous:

AI can produce convincing warmth and consistency at scale.

That makes the “is this real?” problem worse even for honest users. Scammers will use the same tools, but with clearer goals: money, access, control.

So the safety rule is not “assume everyone is a scam.”

The safety rule is: don’t let text-only intimacy run for weeks.

Not in 2026.

Why you can’t rely on “spotting AI” in messages

People want a trick: “How do I tell if they’re using AI?”

There is no reliable single test in text.

AI text detectors aren’t dependable enough

Testing shows AI-content detectors can be inconsistent, especially with short text, casual language, and mixed authorship (human + AI edits) (ZDNet, Effortless Academic). In dating chats, those conditions are normal.

So if your plan is “I’ll run their texts through a detector,” that’s not a safety strategy. That’s a confidence generator that can be wrong.

Platform safety systems have blind spots

Moderation tools are built to catch harassment, spam patterns, and obvious fraud. AI-written romance chat often looks like “good behavior”: supportive, polite, consistent. That style can slip past systems tuned to catch hostility or known scam templates (arXiv preprint).

Humans are easier to fool than they think

Even when users know chatfishing exists, they often don’t recognize it in real conversations (Scientific American). The tone is plausible. The emotional pacing is smooth. That’s the point.

Bottom line: stop looking for perfect detection. Switch to verification.

The trust problem: what’s real, what’s performance, what’s outsourced?

Dating apps run on trust signals. Text effort used to be one of them.

Now that AI can create “effort-like” text instantly, the signal is weaker. That doesn’t mean trust is dead. It means trust has to move to stronger signals earlier.

This is the same shift you see in security: when passwords fail, you add multi-factor authentication (MFA). When text becomes ambiguous, you add voice and video.

Not because it’s perfect—because it raises the cost of deception and reduces time-waste.

You don’t need to become cynical. You need to become procedural.

What to do now: The Trust Ladder protocol

The goal is not to “catch” people. It’s to stop wasting time and emotional energy.

Think of the Trust Ladder like this: as your investment rises, verification rises.

Step 1: Text (short)

Use text to confirm basics: intent, location, timeline. Keep it short. Don’t start building a deep emotional bond with someone you have not verified.

Signals (not proof, just signals) that you should escalate faster:

- perfectly smooth replies with zero personal texture

- high emotional intensity early

- dodging simple specifics (schedule, rough area, realistic details)

Step 2: Voice (early)

Move to a short voice call before you invest.

Script:

“Quick call? I’ve learned texting can be misleading. Ten minutes is enough.”

A voice call isn’t a character test. It’s a reality test.

Step 3: Video (when it matters)

If you are messaging daily and the conversation is becoming emotionally meaningful, video becomes the next checkpoint.

Script:

“I don’t want to build momentum on text alone. Can we do a quick video hello?”

If someone wants weeks of daily texting but won’t do a 5–10 minute video call, treat that as meaningful risk.

Step 4: Real-world plan (fast, safe, public)

Keep it short and low-stakes.

Script:

“Let’s do a 30-minute coffee this week. If it’s good, we can extend.”

Experts commonly recommend moving to voice/video earlier as a practical verification step because text alone is now too easy to fake or outsource (BBC, Western University).
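
If it helps to see the ladder as a procedure, here is a minimal Python sketch of the four steps above. It is an illustration, not a tool from any of the cited sources. The names `Rung`, `Match`, and `next_step` are hypothetical, and the 7-day voice deadline is an assumption borrowed from the upper end of the “3–7 days” guideline in the checklist later in this post.

```python
from dataclasses import dataclass
from enum import IntEnum


class Rung(IntEnum):
    """Rungs of the Trust Ladder, in the order the post describes them."""
    TEXT = 1
    VOICE = 2
    VIDEO = 3
    IN_PERSON = 4


@dataclass
class Match:
    days_texting: int                    # days since the first message
    highest_rung: Rung = Rung.TEXT       # highest step completed so far
    refused_verification: bool = False   # declined a voice or video call


# Assumption: upper bound of the checklist's "voice within 3-7 days".
VOICE_DEADLINE_DAYS = 7


def next_step(match: Match) -> str:
    """Suggest the next move for one match (illustrative only)."""
    if match.refused_verification:
        return "Treat the refusal as a meaningful risk signal."
    if match.highest_rung == Rung.IN_PERSON:
        return "Verified in person; judge the real-world connection."
    if match.highest_rung == Rung.TEXT and match.days_texting > VOICE_DEADLINE_DAYS:
        return "Overdue: ask for a short voice call now."
    upcoming = Rung(match.highest_rung + 1)
    return f"Next rung: {upcoming.name.lower().replace('_', ' ')}."


print(next_step(Match(days_texting=2)))
print(next_step(Match(days_texting=10)))
print(next_step(Match(days_texting=10, highest_rung=Rung.VOICE)))
```

The point of the sketch is the ordering: each rung is only reachable from the one below it, and a refusal to verify short-circuits everything else.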

How to handle “AI help” without turning it into a war

At some point, you may run into someone who says, “Of course I use AI. Everyone does.”

You don’t need to moralize. You need boundaries.

A clean boundary looks like this:

- Light help can be fine (fixing grammar, reducing anxiety).

- Proxy dating is not (outsourcing the relationship-building).

Ask directly, without accusation:

“Do you use AI for messages? I’m fine with small help. I just don’t want to bond with an assistant.”

If they get angry, defensive, or refuse basic verification (voice/video), you have your answer. You’re not negotiating their feelings. You’re protecting your time.

Checklist: AI dating safety in 10 minutes

Before you invest emotionally:

- Keep early texting short and practical.

- Move to voice within 3–7 days (or sooner if intensity rises).

- Move to video before prolonged daily messaging.

- Verify basic specifics: general schedule, rough area, realistic details.

- Avoid sending intimate photos or sensitive personal info early.

- Treat refusal to verify as a meaningful signal.

If you suspect scams:

- Stop sending money, gift cards, or “help.”

- Screenshot everything.

- Report on-platform.

- Tell a friend what’s happening (scams thrive in secrecy).

The 7 / 30 / 90-day plan

Next 7 days: stop the time-waste

- Move active conversations onto the Trust Ladder.

- Set a rule: no deep intimacy without voice/video.

- Clean your profile: remove details that make social engineering easy (workplace specifics, family names, exact routines).

Next 30 days: rebuild your dating system

- Write one “verification script” you always use (voice + video).

- Decide what you will not do in text (heavy emotional processing, sexting, financial talk).

- Identify your top 3 dealbreakers early so AI-polished chats don’t distract you.

Next 90 days: raise your standards

- Track outcomes: how often “great texting” becomes “weak real-life connection.”

- Prioritize in-person compatibility over message performance.

- Build a repeatable process so dating apps don’t become emotional casinos.
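
One way to do the “track outcomes” step from the 90-day list is a tiny log you update after each first date. The sketch below is one possible approach, assuming you record three yes/no answers per match. The `Outcome` fields and the `texting_letdown_rate` function are made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Outcome:
    great_texting: bool      # did the chat feel unusually good?
    met_in_person: bool      # did you actually meet?
    strong_in_person: bool   # did the connection hold up offline?


def texting_letdown_rate(outcomes: List[Outcome]) -> Optional[float]:
    """Share of great-texting matches you met that felt weak in person."""
    met = [o for o in outcomes if o.great_texting and o.met_in_person]
    if not met:
        return None  # nothing to measure yet
    weak = sum(1 for o in met if not o.strong_in_person)
    return weak / len(met)


log = [
    Outcome(great_texting=True, met_in_person=True, strong_in_person=False),
    Outcome(great_texting=True, met_in_person=True, strong_in_person=True),
    Outcome(great_texting=True, met_in_person=False, strong_in_person=False),
    Outcome(great_texting=False, met_in_person=True, strong_in_person=True),
]
print(texting_letdown_rate(log))
```

With only a handful of dates the number is noisy, so treat it as a prompt for reflection rather than a statistic.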

Claims & Verification

What we can safely claim (from the sources):

- Chatfishing describes AI-assisted romantic messaging that may be undisclosed (Scientific American, BBC).

- Survey data reported rapid growth in AI use to “enhance dating” (26% of U.S. singles, a 333% year-over-year increase in that survey) (Kinsey Institute / Match Group via IU News).

- Major companies and platforms have discussed or piloted AI features for photos, profiles, and conversation help (Business Insider).

- Research on AI-assisted scams shows LLM agents can be highly persuasive in romance-baiting contexts (arXiv, Help Net Security).

- AI text detection tools can be inconsistent, especially in short-form contexts (ZDNet, Effortless Academic).

- Experts commonly recommend earlier escalation to voice/video as a practical verification approach (BBC, Western University).
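
For readers who want to sanity-check the percentages cited in this post, the short Python sketch below does the arithmetic. It assumes the 333% figure is a relative increase over the prior year's share. The resulting prior-year number is implied by that arithmetic and is not a figure quoted from the survey. The function names are illustrative.

```python
from typing import Tuple


def implied_prior_share(current_pct: float, increase_pct: float) -> float:
    """Back out last year's share from this year's share and the % increase."""
    return current_pct / (1 + increase_pct / 100)


def compare_rates(a_pct: float, b_pct: float) -> Tuple[float, float]:
    """Return (percentage-point gap, ratio) between two rates."""
    return a_pct - b_pct, a_pct / b_pct


# 26% of singles after a 333% increase implies about 6% the year before.
prior = implied_prior_share(26, 333)

# 46% vs 18% compliance: a 28-point gap, about 2.6 times as high.
gap, ratio = compare_rates(46, 18)

print(f"implied prior-year share: {prior:.1f}%")
print(f"compliance gap: {gap:.0f} points, ratio: {ratio:.1f}x")
```

Because the published figures are rounded, these results are approximate. The takeaway is scale: a 333% increase means roughly four times the prior level, not three.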

What’s uncertain:

- How many mainstream dating conversations are “mostly AI” vs “lightly assisted.” Many surveys do not separate levels cleanly (Scientific American).

- Whether AI assistance improves long-term relationship outcomes; there is limited longitudinal evidence (Western University).

- How disclosure rules and labeling will work inside private chats when AI is used as a tool by a human (see EU AI transparency policy discussions: EU Digital Strategy).

- Whether detection and watermarking will become reliable for short messages as models evolve (ZDNet).