The AI Divide: Why Some Students Learn to Think and Others Don’t

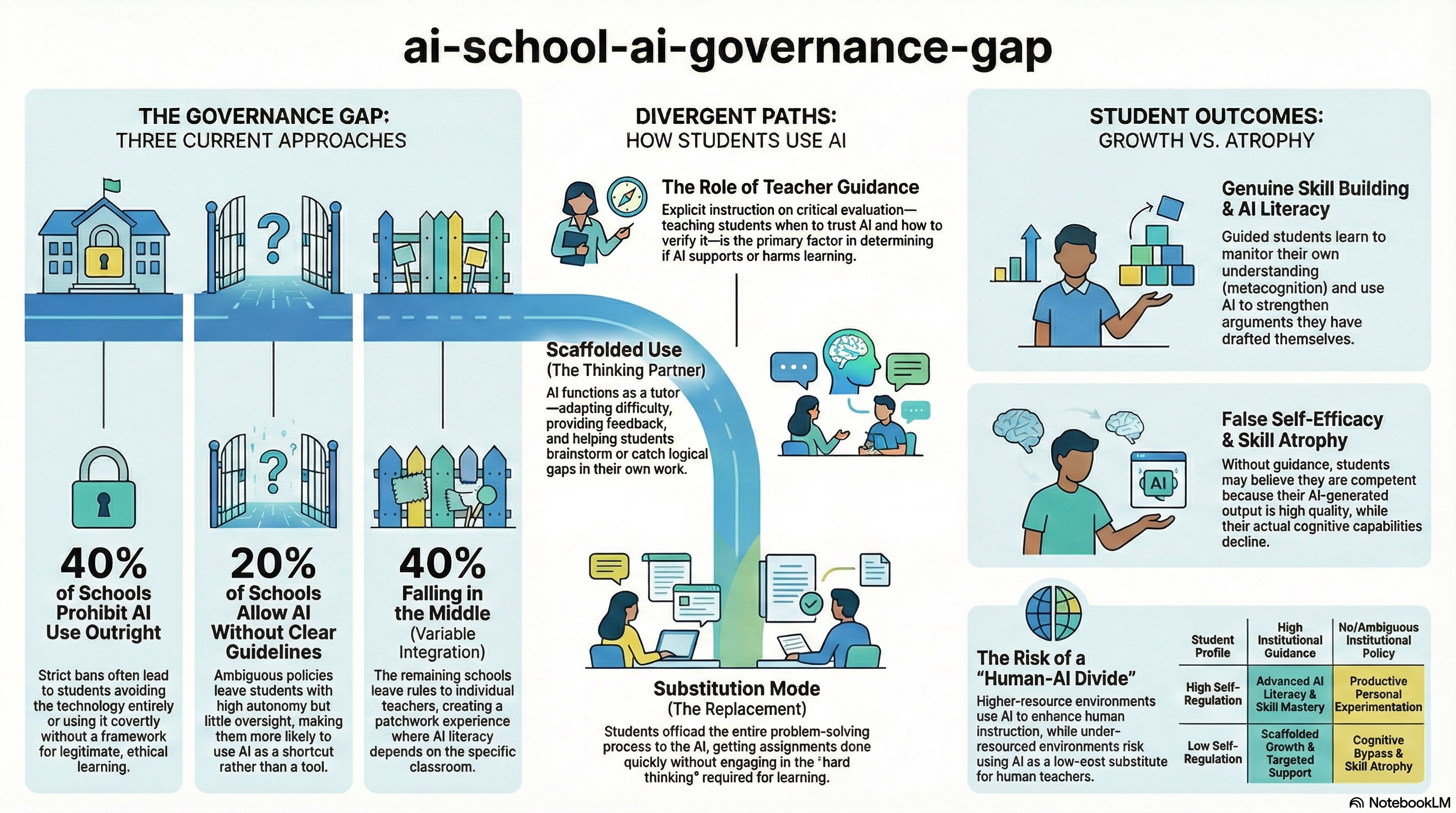

When Maria Chen’s ninth-grade son started bringing home polished essays on topics he could barely discuss at dinner, she knew something had changed. He wasn’t cheating in the traditional sense—he was using AI to write his assignments. Down the street, another parent was teaching her daughter to use the same tools to brainstorm ideas, catch logical gaps, and refine arguments she’d already written herself. Same technology. Same grade level. Completely different outcomes.

This split—between students who use AI to think better and students who use it to avoid thinking at all—is creating what researchers now call the AI divide. It’s not just about who has access to ChatGPT or other generative AI tools. Over 80% of high school students already use them. The real question is what happens next.

Beyond Access

For decades, educators worried about the digital divide: disparities in who could afford computers and internet access. That gap hasn’t disappeared, but a new layer has emerged on top of it. Students now differ not primarily in whether they can reach AI tools, but in how they use them and who’s guiding that use.

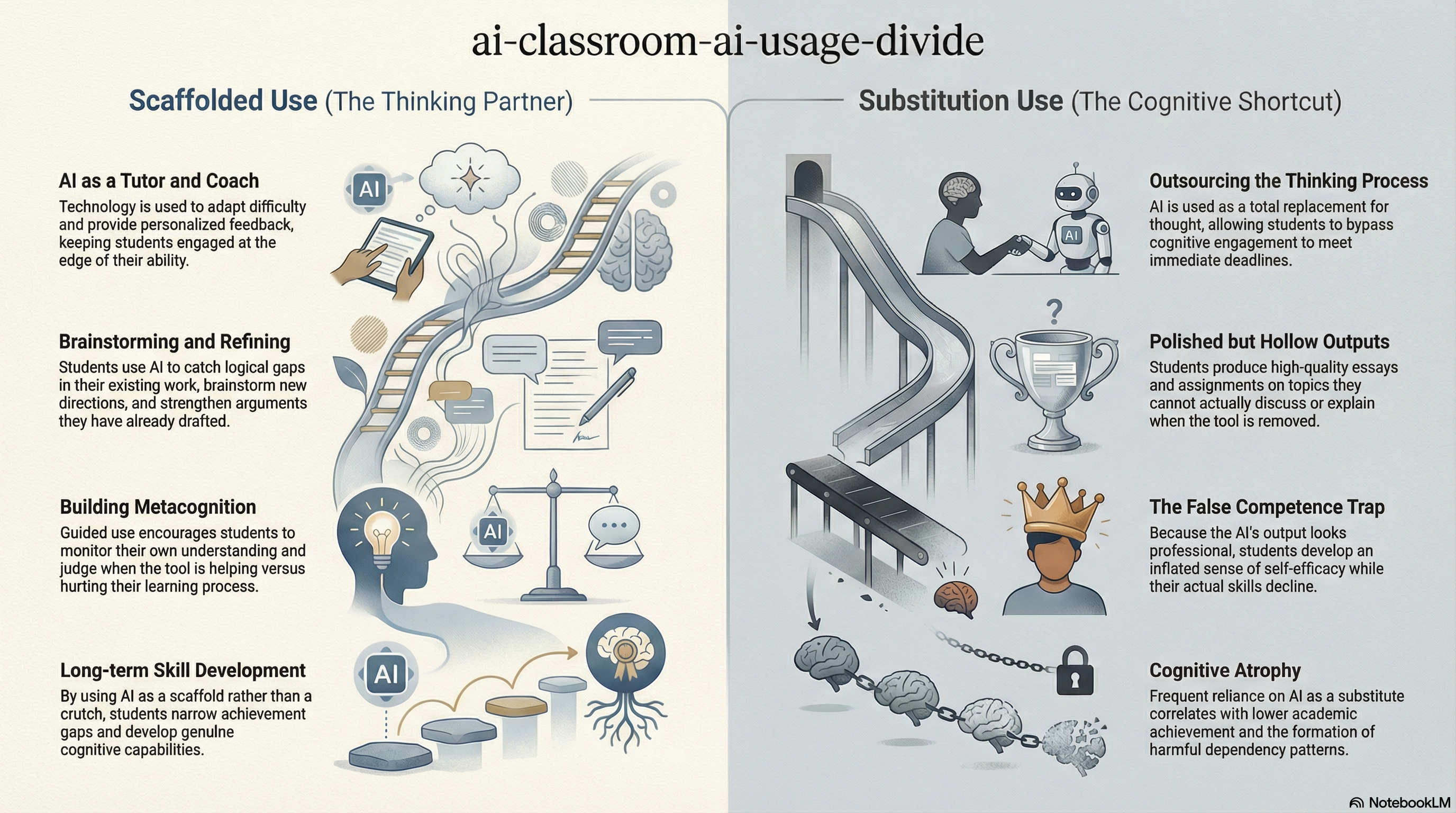

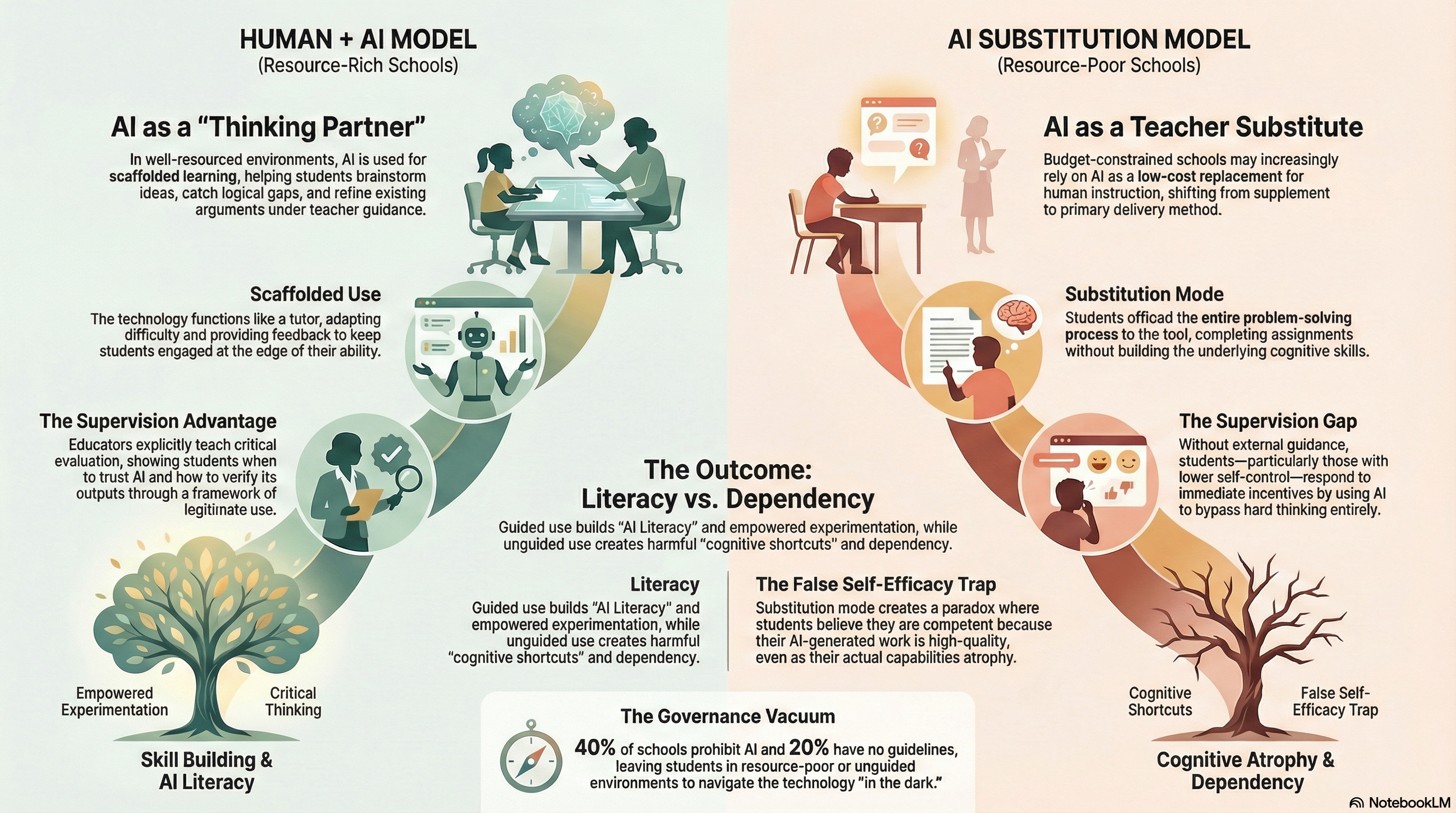

The AI divide has multiple dimensions. There’s a usage divide—differences in whether technology gets deployed for sophisticated, creative purposes or just to complete tasks quickly. There’s an outcome divide—who actually benefits in terms of learning and opportunity, even when everyone has the same app on their phone. And critically, there’s a supervision gap: the chasm between students using AI under teacher guidance and those navigating it alone, often in the dark about both the technology’s capabilities and its risks.

This matters because AI neither helps nor harms uniformly. Its effect depends on how it’s used, and early patterns suggest it may be improving outcomes for some students while degrading skills for others.

How the Split Happens

Teacher guidance appears to be the primary factor determining AI’s impact. In classrooms where educators explicitly teach critical evaluation—helping students understand what AI can and cannot do, when to trust it, and how to verify its outputs—the technology tends to support learning. Students in these environments receive instruction on using AI as a thinking partner rather than a replacement for thought.

In contrast, students with high autonomy and little oversight effectively teach themselves. Research indicates that, without external guidance, students with lower self-control are significantly more likely to use AI to bypass cognitive engagement entirely.

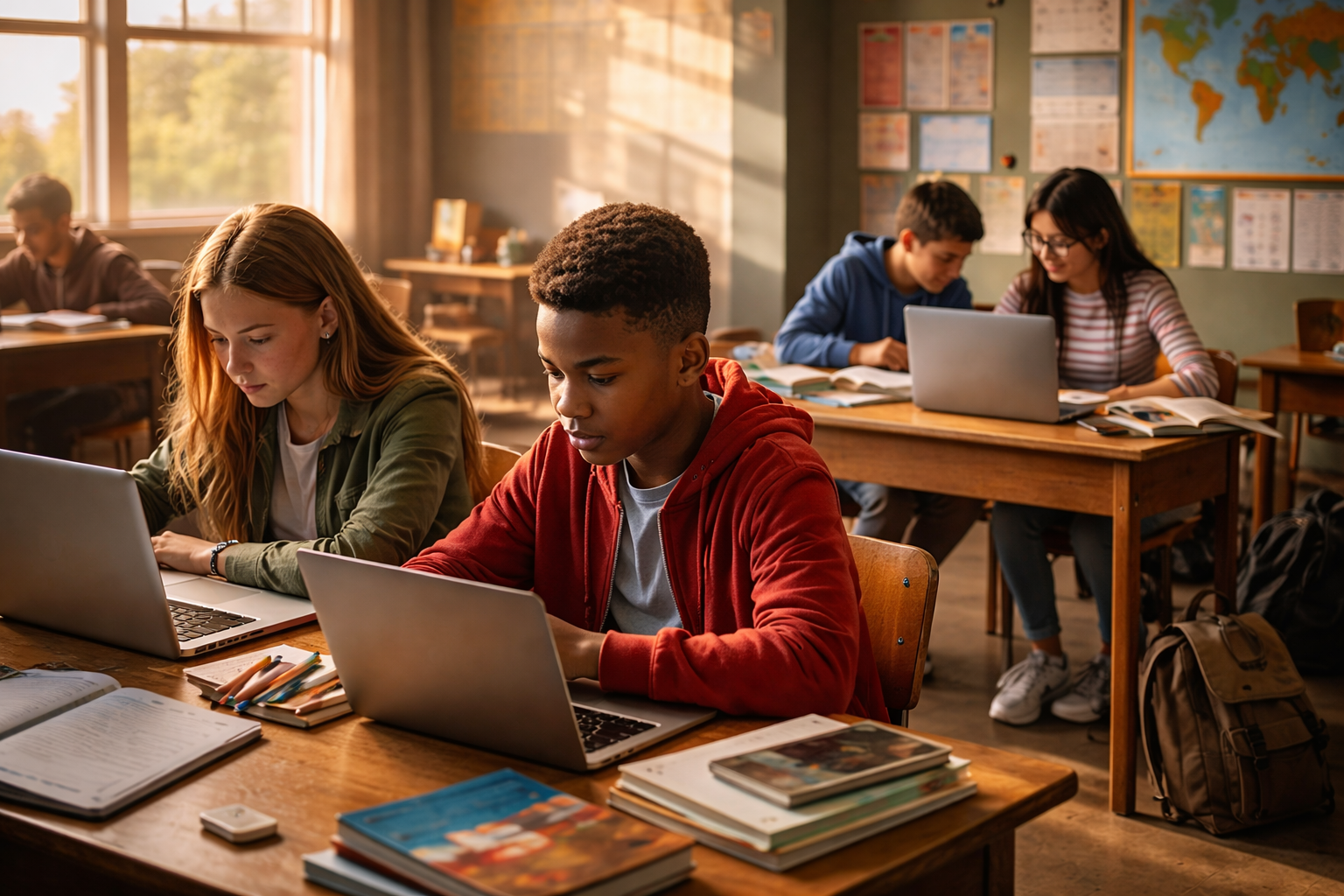

This creates two distinct usage patterns. In what researchers call scaffolded use, AI functions like a tutor—adapting difficulty, providing feedback, keeping students engaged with material at the edge of their ability. This approach can actually narrow achievement gaps.

But in substitution mode, students offload the entire problem-solving process. They get assignments done, but they’re not building skills. Worse, because the output looks good, they develop a false sense of self-efficacy: confidence in abilities they have not actually built.

The Governance Vacuum

Schools have responded to AI with wildly inconsistent policies, creating what amounts to a governance gap.

This patchwork matters because it creates radically different learning conditions. Students in AI-literate households feel empowered to experiment productively regardless of official policy. Students without that support face a different reality.

Age Matters

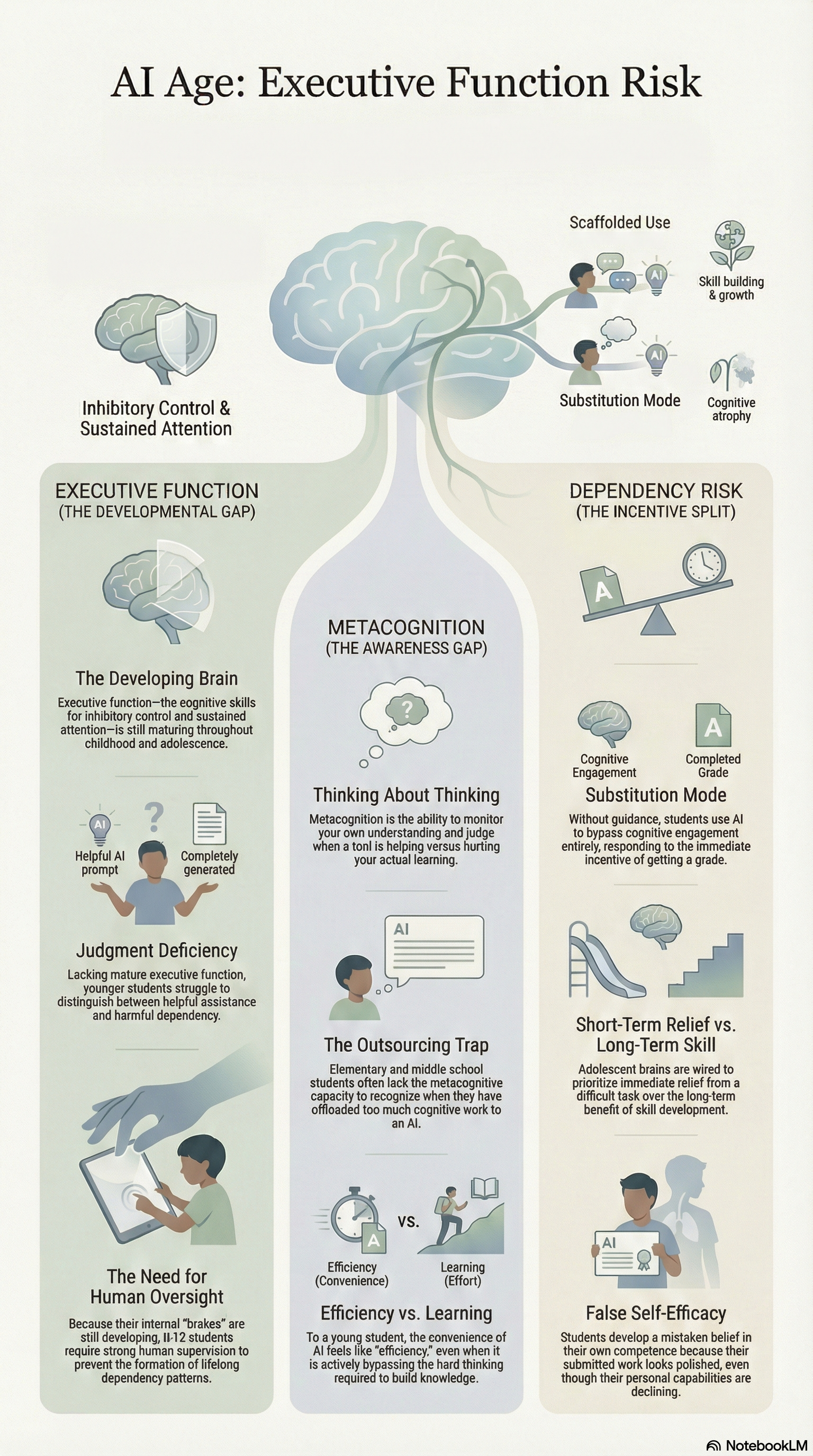

Younger students face distinct vulnerabilities. Executive function develops throughout childhood and adolescence, and effective AI use requires metacognition, the ability to monitor and regulate one’s own thinking.

Lacking mature metacognitive capacity, younger students struggle to recognize when they’re outsourcing too much cognitive work.

The Equity Paradox

AI’s relationship to educational equity cuts both ways.

On one side, AI can reduce inequity by providing tutoring and accessibility support. On the other, it risks becoming a low-cost substitute for human instruction in under-resourced schools.

What We Know and What We Don’t

Some claims rest on solid ground. AI adoption is widespread and outpacing policy. Usage patterns map onto existing inequalities. Guidance matters.

But we lack longitudinal data. We don’t yet know optimal scaffolding thresholds or long-term outcomes.

The Pattern Repeating

This story is familiar. Previous technologies followed the same script, amplifying advantage rather than erasing it.

The technology is neutral. The divide emerges from differences in policy clarity, guidance, and developmental readiness.

Without deliberate intervention, AI risks becoming another mechanism through which educational advantages quietly compound—not because of what it can do, but because of who is taught to use it well.